Since 2018, the consortium MLCommons has been running a sort of Olympics for AI training. The competition, called MLPerf, consists of a set of tasks for training specific AI models, on predefined datasets, to a certain accuracy. Essentially, these tasks, called benchmarks, test how well a hardware and low-level software configuration is set up to train a particular AI model.

Twice a year, companies put together their submissions (usually clusters of CPUs and GPUs, plus software optimized for them) and compete to see whose submission can train the models fastest.

There is no question that since MLPerf’s inception, the cutting-edge hardware for AI training has improved dramatically. Over the years, Nvidia has released four new generations of GPUs that have since become the industry standard (the latest, Nvidia’s Blackwell GPU, is not yet standard but is growing in popularity). The companies competing in MLPerf have also been using larger clusters of GPUs to tackle the training tasks.

However, the MLPerf benchmarks have also gotten tougher. This increased rigor is by design: the benchmarks are trying to keep pace with the industry, says David Kanter, head of MLPerf. “The benchmarks are meant to be representative,” he says.

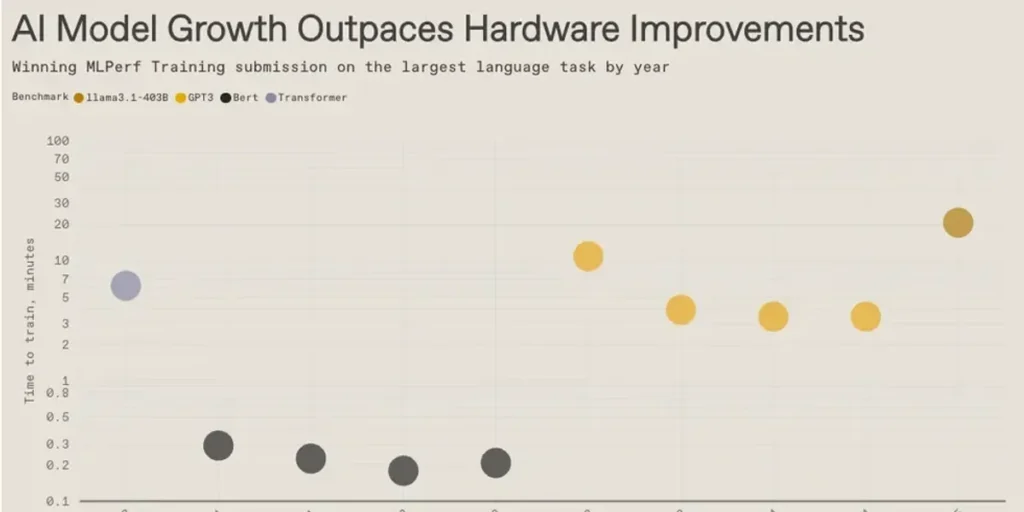

Intriguingly, the data show that large language models and their precursors have been increasing in size faster than the hardware has kept up. So each time a new benchmark is introduced, the fastest training time gets longer. Then, hardware improvements gradually bring the execution time down, only to be thwarted again by the next benchmark. Then the cycle repeats itself.

This article appears in the November 2025 print issue.
