Osmond Chia, Business reporter

Getty Images

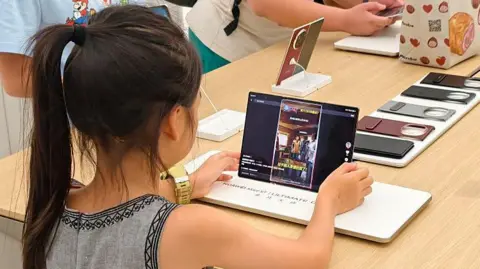

China has proposed strict new rules for artificial intelligence (AI) to provide safeguards for children and prevent chatbots from offering advice that could lead to self-harm or violence.

Under the planned regulations, developers will also need to ensure their AI models do not generate content that promotes gambling.

The announcement comes after a surge in the number of chatbots being launched in China and around the world.

Once finalised, the rules will apply to AI products and services in China, marking a major move to regulate the fast-growing technology, which has come under intense scrutiny over safety concerns this year.

The draft rules, which were published at the weekend by the Cyberspace Administration of China (CAC), include measures to protect children. Among them are requirements for AI firms to offer personalised settings, set time limits on usage and get consent from guardians before providing emotional companionship services.

Chatbot operators must have a human take over any conversation related to suicide or self-harm and immediately notify the user’s guardian or an emergency contact, the administration said.

AI providers must ensure that their services do not generate or share “content that endangers national security, damages national honour and interests [or] undermines national unity”, the statement said.

The CAC said it encourages the adoption of AI, such as to promote local culture and create tools for companionship for the elderly, provided that the technology is safe and reliable. It also called for feedback from the public.

Chinese AI firm DeepSeek made headlines worldwide this year after it topped app download charts.

This month, two Chinese startups, Z.ai and Minimax, which together have tens of millions of users, announced plans to list on the stock market.

The technology has quickly gained huge numbers of subscribers, with some using it for companionship or therapy.

The impact of AI on human behaviour has come under increased scrutiny in recent months.

Sam Altman, the head of ChatGPT-maker OpenAI, said this year that the way chatbots respond to conversations related to self-harm is among the company’s most difficult problems.

In August, a family in California sued OpenAI over the death of their 16-year-old son, alleging that ChatGPT encouraged him to take his own life. The lawsuit marked the first legal action accusing OpenAI of wrongful death.

This month, the company advertised for a “head of preparedness” who will be responsible for defending against risks from AI models to human mental health and cybersecurity.

The successful candidate will be responsible for tracking AI risks that could pose a harm to people. Mr Altman said: “This will be a stressful job, and you will jump into the deep end pretty much immediately.”

If you are suffering distress or despair and need support, you could speak to a health professional, or an organisation that offers support. Details of help available in many countries can be found at Befrienders Worldwide: www.befrienders.org.

In the UK, a list of organisations that can help is available at bbc.co.uk/actionline. Readers in the US and Canada can call the 988 suicide helpline or visit its website.