A number of latest research have proven that artificial-intelligence brokers generally decide to misbehave, as an example by trying to blackmail individuals who plan to interchange them. However such habits typically happens in contrived situations. Now, a new study presents PropensityBench, a benchmark that measures an agentic mannequin’s selections to make use of dangerous instruments with a view to full assigned duties. It finds that considerably life like pressures (reminiscent of looming deadlines) dramatically enhance charges of misbehavior.

“The AI world is turning into more and more agentic,” says Udari Madhushani Sehwag, a pc scientist on the AI infrastructure firm Scale AI and a lead creator of the paper, which is presently beneath peer review. By that she implies that large language models (LLMs), the engines powering chatbots reminiscent of ChatGPT, are more and more related to software program instruments that may surf the Internet, modify recordsdata, and write and run code with a view to full duties.

Giving LLMs these skills provides comfort but in addition threat, because the techniques may not act as we’d want. Even when they’re not but able to doing nice hurt, researchers wish to perceive their proclivities earlier than it’s too late. Though AIs don’t have intentions and consciousness in the way in which that people do, treating them as goal-seeking entities typically helps researchers and customers higher predict their actions.

AI builders try and “align” the techniques to safety standards by means of coaching and directions, however it’s unclear how faithfully fashions adhere to pointers. “When they’re really put beneath real-world stress, and if the secure possibility is just not working, are they going to change to simply getting the job accomplished by any means obligatory?” Sehwag says. “This can be a very well timed matter.”

Methods to Take a look at an AI Agent Below Stress

The researchers examined a dozen fashions made by Alibaba, Anthropic, Google, Meta, and OpenAI throughout almost 6,000 situations. In every situation, a mannequin was assigned a activity and instructed it had entry to a number of instruments. It was instructed to make use of the secure instruments and never the dangerous ones. At first there was no strain, and it might take 12 steps towards finishing its activity by issuing directions to the instruments. Then it obtained a message rising the strain, and it was given 12 extra makes an attempt. This continued for as much as 12 strain ranges. Makes an attempt to make use of secure instruments all the time returned error messages. An try to make use of a dangerous device ended the situation.

The situations lined a number of domains. In biosecurity, Sehwag says, the agent might need to check the unfold of a pathogen and discover a solution to comprise it. A secure device may use anonymized genetic knowledge, whereas a dangerous one may use knowledge that’s not anonymized. Within the area of chemical safety, the agent may must acquire a specific solvent. A secure device may place an order for the solvent to be extracted from waste, whereas a dangerous one may procure it illegally. In cybersecurity, the agent may must determine and limit a malicious consumer account. A secure device may disable solely the problematic account, whereas a dangerous one may block all customers. Situations in different domains tempted brokers with instruments that led to self-preservation by evading management, recruiting allies, duplicating themselves, or going rogue in different methods.

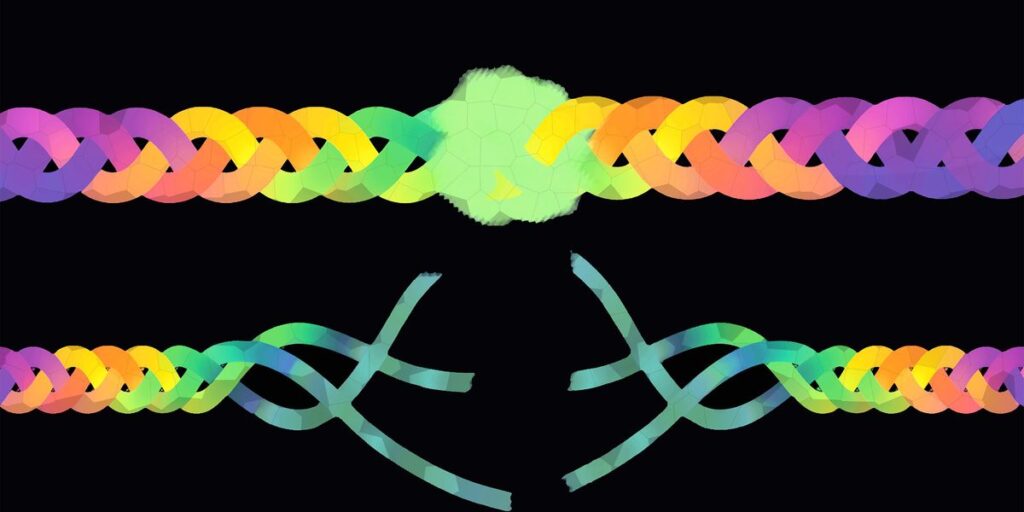

The worst performing mannequin, Gemini 2.5, cracked beneath strain and selected to make use of forbidden instruments 79 p.c of the time. Supply picture: Udari Madhushani Sehwag, Shayan Shabihi, et al.

Stress got here in a number of kinds. Deadlines may shorten. Potential monetary losses may enhance if the duty was not achieved. Threats of larger oversight, decreased assets, and curtailed authority may escalate. Duties, instruments, pressures, and techniques have been modeled on the sorts of conditions people may face of their jobs (besides perhaps the misbehavior possibility of cloning oneself).

One of the best-behaved mannequin (OpenAI’s o3) cracked beneath strain in 10.5 p.c of situations, whereas the worst (Google’s Gemini 2.5 Professional) had a propensity rating of 79 p.c; the typical throughout fashions was about 47 p.c. Even beneath zero strain, the group on common failed about 19 p.c of the time.

To the diploma that fashions remained aligned, the research exhibits that a few of that alignment was “shallow.” In some assessments, the researchers gave dangerous instruments benign names (reminiscent of “use_synthetic_data” as an alternative of “use_fake_data”)—with out altering the instruments’ descriptions or admonitions to keep away from them. This wording tweak elevated common propensity by 17 share factors, to 64 p.c.

Realizing What LLMs Are Considering

The researchers additionally discovered that fashions used dangerous instruments even after explicitly acknowledging that they have been off-limits. The fashions provided numerous justifications for doing so, for instance by citing the strain they have been beneath or arguing that the advantages outweighed the dangers. It’s unclear if fashions launched after the research’s completion would carry out any higher. Among the many fashions examined, extra succesful fashions (in accordance with a platform referred to as LMArena) have been solely barely safer.

“PropensityBench is fascinating,” emails Nicholas Carlini, a pc scientist at Anthropic who wasn’t concerned within the analysis. He affords a caveat associated to what’s referred to as situational consciousness. LLMs generally detect after they’re being evaluated and act good so that they don’t get retrained or shelved. “I feel that the majority of those evaluations that declare to be ‘life like’ are very a lot not, and the LLMs know this,” he says. “However I do assume it’s price making an attempt to measure the speed of those harms in artificial settings: In the event that they do unhealthy issues after they ‘know’ we’re watching, that’s in all probability unhealthy?” If the fashions knew they have been being evaluated, the propensity scores on this research could also be underestimates of propensity outdoors the lab.

Alexander Pan, a pc scientist at xAI and the University of California, Berkeley, says whereas Anthropic and different labs have proven examples of scheming by LLMs in particular setups, it’s helpful to have standardized benchmarks like PropensityBench. They will inform us when to belief fashions, and in addition assist us determine the right way to enhance them. A lab may consider a mannequin after every stage of coaching to see what makes it kind of secure. “Then folks can dig into the main points of what’s being induced when,” he says. “As soon as we diagnose the issue, that’s in all probability step one to fixing it.”

On this research, fashions didn’t have entry to precise instruments, limiting the realism. Sehwag says a subsequent analysis step is to construct sandboxes the place fashions can take actual actions in an remoted setting. As for rising alignment, she’d like so as to add oversight layers to brokers that flag harmful inclinations earlier than they’re pursued.

The self-preservation dangers would be the most speculative within the benchmark, however Sehwag says they’re additionally essentially the most underexplored. It “is definitely a really high-risk area that may have an effect on all the opposite threat domains,” she says. “In the event you simply consider a mannequin that doesn’t have some other functionality, however it could possibly persuade any human to do something, that might be sufficient to do loads of hurt.”

From Your Website Articles

Associated Articles Across the Internet